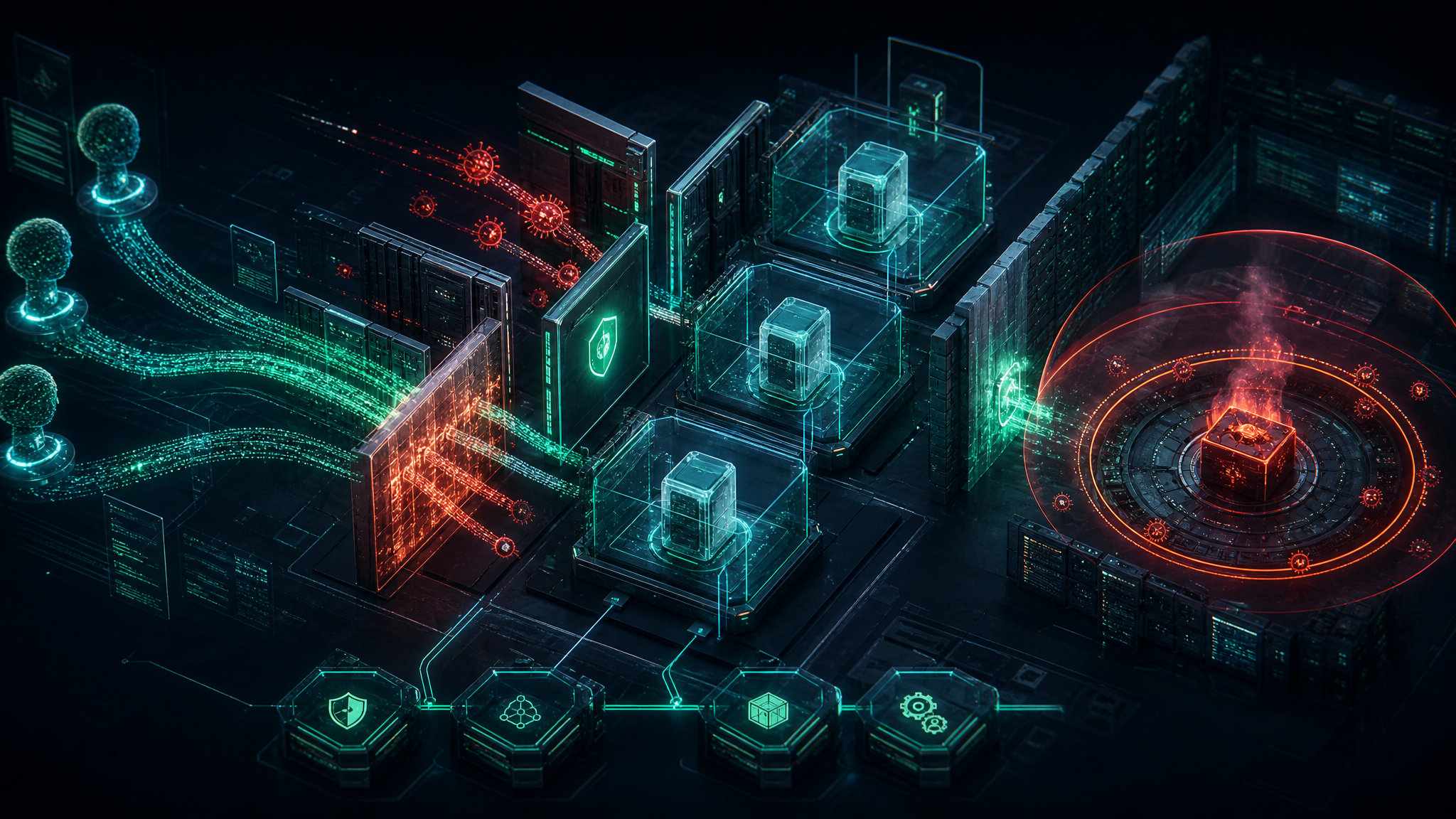

Microsoft’s latest AI security research is a useful warning for any organization experimenting with tool-connected agents: prompt injection is no longer just a chatbot problem. Once an AI agent can call plugins, run code, retrieve files, or move data between systems, a hostile prompt can become a path to real execution if the framework trusts model-controlled parameters.

The research focuses on two vulnerabilities Microsoft found and patched in Semantic Kernel: CVE-2026-26030, a Python issue where model-controlled search parameters could reach an unsafe evaluation path in an in-memory vector store filter, and CVE-2026-25592, a .NET issue where an exposed file-transfer function could let an agent write files to unsafe host paths. In both cases, the important lesson is bigger than one framework: the model was not “hacked” in the traditional sense. The surrounding agent architecture gave natural-language input a route into dangerous operations.

Why this matters

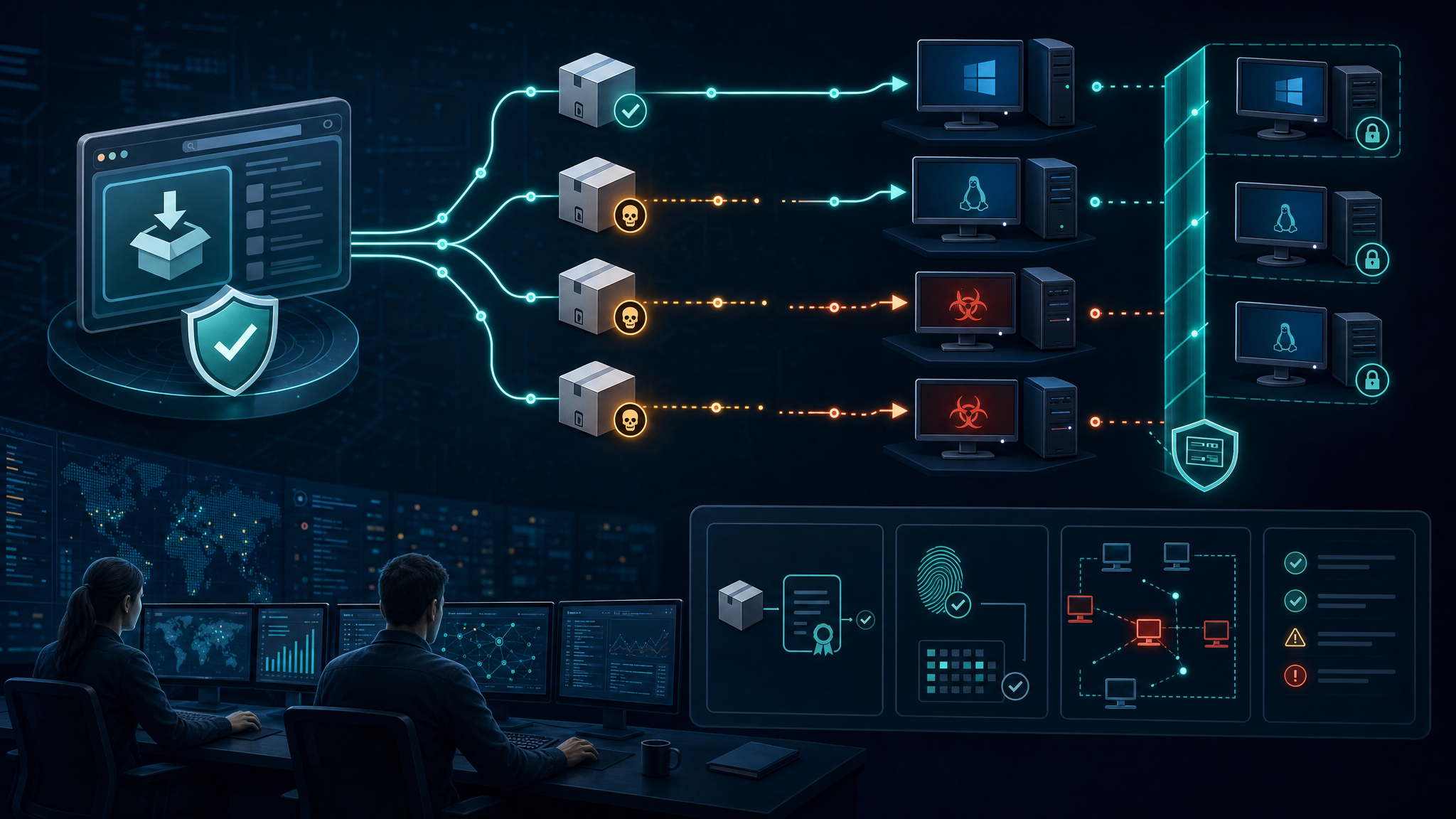

For SMBs, startups, and government contractors building AI-assisted workflows, agent frameworks are becoming the new glue layer between internal data, cloud APIs, ticketing systems, repositories, scripts, and business processes. That convenience creates a new attack surface. If the agent can touch a file path, shell command, database query, ticketing action, cloud account, or identity provider, defenders should assume an attacker will eventually try to influence those tool calls through a prompt injection path.

This is especially relevant for organizations adding AI features quickly. Internal copilots, SOC assistants, proposal-writing agents, RAG applications, customer-support agents, and workflow automation bots often start as “low risk” experiments. But once those experiments are connected to production data or host-level tooling, prompt injection can cross from content manipulation into execution, data theft, or persistence.

What Microsoft reported

Microsoft’s post describes two Semantic Kernel vulnerability classes:

- Prompt-to-code execution through unsafe filtering. In the vulnerable Python path, an attacker-controlled value could influence a filter expression used by a search plugin backed by an in-memory vector store. Under the right conditions, that could lead to remote code execution on the system hosting the agent.

- Sandbox boundary failure through exposed file-transfer tools. In the vulnerable .NET path, a helper function intended for programmatic file movement was exposed as a callable AI tool. That gave the model influence over host-side file paths, creating an arbitrary file write primitive.
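To make the first vulnerability class concrete, here is a minimal Python sketch of a filter evaluator that never hands a model-supplied string to `eval`. It is not Semantic Kernel's code or Microsoft's patch; the function names (`safe_filter`, `_evaluate`) and the filter grammar are assumptions chosen for illustration. The idea is to parse the expression, walk the syntax tree, and interpret only an explicit allowlist of node types.

```python
import ast
import operator

# Hypothetical allowlist: the only comparison operators a simple filter needs.
_COMPARATORS = {
    ast.Eq: operator.eq, ast.NotEq: operator.ne,
    ast.Lt: operator.lt, ast.LtE: operator.le,
    ast.Gt: operator.gt, ast.GtE: operator.ge,
}


def _evaluate(node, record):
    """Interpret an allowlisted AST node against one record; reject the rest."""
    if isinstance(node, ast.Expression):
        return _evaluate(node.body, record)
    if isinstance(node, ast.BoolOp):
        values = [_evaluate(value, record) for value in node.values]
        return all(values) if isinstance(node.op, ast.And) else any(values)
    if isinstance(node, ast.Compare) and len(node.ops) == 1:
        compare = _COMPARATORS.get(type(node.ops[0]))
        if compare is None:
            raise ValueError(f"disallowed operator: {type(node.ops[0]).__name__}")
        left = _evaluate(node.left, record)
        right = _evaluate(node.comparators[0], record)
        return compare(left, right)
    if isinstance(node, ast.Name):
        # Names may only refer to fields of the record, never to builtins.
        if node.id not in record:
            raise ValueError(f"unknown field: {node.id}")
        return record[node.id]
    if isinstance(node, ast.Constant) and isinstance(node.value, (str, int, float, bool)):
        return node.value
    # Calls, attribute access, subscripts, lambdas, arithmetic, etc. end up here.
    raise ValueError(f"disallowed syntax: {type(node).__name__}")


def safe_filter(expression: str, record: dict) -> bool:
    """Evaluate a model-supplied filter without ever calling eval()."""
    try:
        tree = ast.parse(expression, mode="eval")
    except SyntaxError as exc:
        raise ValueError("filter is not a valid expression") from exc
    return bool(_evaluate(tree, record))
```

With this sketch, `safe_filter("year >= 2024 and team == 'soc'", {"year": 2025, "team": "soc"})` evaluates normally, while a payload such as `__import__('os').system('id')` is rejected with `ValueError` because `ast.Call` is not on the allowlist.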

Microsoft says the issues have been patched. Semantic Kernel Python users should upgrade to semantic-kernel 1.39.4 or later for CVE-2026-26030. Semantic Kernel .NET SDK users should upgrade to 1.71.0 or later for CVE-2026-25592.
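For Python environments, a quick check like the one below can confirm whether an installed copy meets the patched version stated above. This is a rough sketch: the helper names are hypothetical, the version parser is deliberately simple (it ignores pre-release suffixes), and it assumes the package is installed under the distribution name `semantic-kernel`.

```python
from importlib import metadata

# Minimum patched Python release named in the text above (CVE-2026-26030).
MIN_PATCHED = (1, 39, 4)


def parse_version(text: str) -> tuple:
    """Parse 'major.minor.patch' into integers, ignoring any trailing suffix.

    Simplification: '1.39.4rc1' is treated the same as '1.39.4'.
    """
    parts = []
    for piece in text.split(".")[:3]:
        digits = ""
        for ch in piece:
            if not ch.isdigit():
                break
            digits += ch
        parts.append(int(digits or 0))
    while len(parts) < 3:
        parts.append(0)  # pad '1.39' to (1, 39, 0)
    return tuple(parts)


def is_patched(installed: str, minimum: tuple = MIN_PATCHED) -> bool:
    """Return True when the installed version is at or above the minimum."""
    return parse_version(installed) >= minimum


def check_installed(package: str = "semantic-kernel"):
    """Return True/False for an installed package, or None if it is absent."""
    try:
        installed = metadata.version(package)
    except metadata.PackageNotFoundError:
        return None
    return is_patched(installed)


if __name__ == "__main__":
    print("semantic-kernel patched:", check_installed())
```

Running a check like this across agent hosts also feeds the inventory work described in the takeaways: it records which environments still carry a vulnerable version.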

Defensive takeaways

- Inventory AI agents like applications, not experiments. Track which agents exist, what framework and version they use, what tools they can call, what secrets they can access, and which hosts or cloud roles they run under.

- Treat every model-controlled tool parameter as untrusted input. Validate file paths, command arguments, URLs, search filters, database selectors, and workflow IDs the same way you would validate web application input.

- Use allowlists over blocklists. Blocklists fail when attackers find alternate syntax or object traversal paths. Prefer strict schemas, canonicalized paths, constrained enums, safe AST node allowlists, and narrowly scoped function exposure.

- Do not expose helper functions to the model by accident. Review annotations/decorators/metadata that publish functions as agent tools. If a function moves files, executes code, reads secrets, sends messages, or changes state, it deserves a second review.

- Separate agent runtime identity from human/admin identity. Agents should run with least-privilege service accounts, scoped tokens, and segmented access. A compromised agent host should not become a domain-wide incident.

- Log at both layers. Keep prompt/tool-call telemetry and endpoint telemetry. If an agent process suddenly spawns a shell, writes to startup folders, reads SSH keys, or makes unusual outbound connections, your endpoint detection and response (EDR) tooling should raise an alert.

Bulwark Black assessment

The practical shift is this: agent tools define blast radius. The model is not the security boundary. The framework, tool schema, runtime identity, host isolation, and monitoring controls are the boundary.

Organizations adopting agentic AI should review every connected tool and ask: “If a malicious prompt controlled this parameter, what could it do?” If the answer includes command execution, arbitrary file access, cloud changes, data export, email sending, ticket closure, or identity changes, that tool needs stronger validation, isolation, and audit coverage before it belongs in production.
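One way to run that review consistently is to keep a small machine-readable inventory and flag gaps automatically. The sketch below is an assumption-laden illustration: the `ToolRecord` structure, the capability labels, and the three control names are hypothetical, derived from the risk categories and controls listed in the paragraph above.

```python
from dataclasses import dataclass, field

# Capability labels mirroring the high-impact outcomes named above (hypothetical tags).
HIGH_RISK = {
    "command_execution", "file_access", "cloud_change", "data_export",
    "email_send", "ticket_closure", "identity_change",
}

# Controls a high-risk tool should have before production (hypothetical tags).
REQUIRED_CONTROLS = {"validation", "isolation", "audit"}


@dataclass
class ToolRecord:
    name: str
    capabilities: set
    controls: set = field(default_factory=set)


def review(tools: list) -> dict:
    """Map each high-risk tool to the controls it is still missing."""
    findings = {}
    for tool in tools:
        if tool.capabilities & HIGH_RISK:
            missing = REQUIRED_CONTROLS - tool.controls
            if missing:
                findings[tool.name] = sorted(missing)
    return findings


if __name__ == "__main__":
    inventory = [
        ToolRecord("search_kb", {"read_index"}),
        ToolRecord("export_report", {"data_export"}, {"validation"}),
        ToolRecord("run_script", {"command_execution"},
                   {"validation", "isolation", "audit"}),
    ]
    for name, missing in review(inventory).items():
        print(f"{name}: missing {', '.join(missing)}")
```

In this example only `export_report` is flagged, because it can export data but has validation without isolation or audit coverage.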

Original source: Microsoft Security Blog, “When prompts become shells: RCE vulnerabilities in AI agent frameworks”.