A malicious Hugging Face repository impersonating OpenAI’s “Privacy Filter” project is a useful reminder that AI supply chain risk is no longer theoretical. According to reporting from BleepingComputer and technical analysis from HiddenLayer, the fake repository briefly trended on Hugging Face while distributing a Windows infostealer through a Python loader and batch-file execution chain.

What Happened

The repository, named Open-OSS/privacy-filter, copied OpenAI branding and model-card language closely enough to look legitimate at a glance. The lure was simple: developers and AI users were instructed to clone the project and run local files such as loader.py or start.bat. HiddenLayer found that the loader contained decoy ML-style code, but also fetched a remote JSON payload and executed a hidden PowerShell command on Windows systems.

That PowerShell stage downloaded a batch file, attempted privilege escalation, added Microsoft Defender exclusions, launched a Rust-based infostealer, and exfiltrated browser data, Discord tokens, SSH/FTP/VPN credentials, wallet data, local sensitive files, system information, and screenshots. The campaign also relied on artificial popularity signals, including inflated download and engagement metrics, to make the fake repository look safer than it was.

Why This Matters for SMBs and Gov Contractors

Most small organizations do not think of AI model repositories as a software supply chain risk, but they should. Hugging Face, GitHub, npm, PyPI, container registries, browser extensions, and AI agent tool marketplaces are all part of the same trust problem: employees are downloading executable code from ecosystems where reputation can be faked, names can be typosquatted, and “open source” can be used as a social engineering wrapper.

For government contractors, the risk is even sharper. A single developer workstation may hold VPN profiles, cloud credentials, SSH keys, proposal materials, CUI-adjacent documents, password-manager sessions, and browser tokens for Microsoft 365, Google Workspace, GitHub, AWS, Azure, or government portals. If an infostealer takes those tokens, MFA may not help: a stolen session cookie represents a session that has already passed authentication, so an attacker can often replay it without going through the login flow at all.

Defensive Takeaways

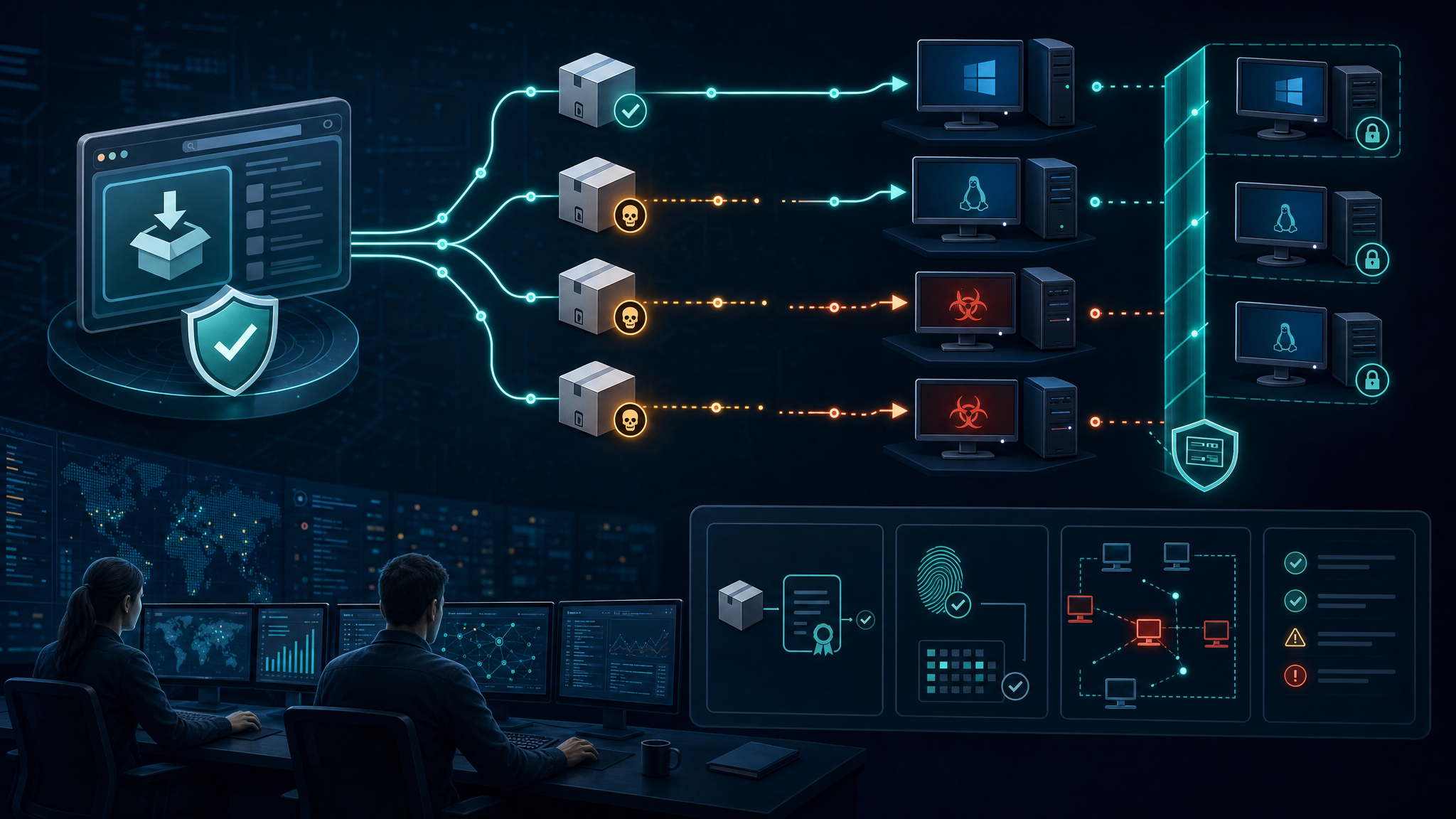

- Treat AI repos as executable code, not content. Model demos, loaders, notebooks, and “helper” scripts should go through the same review process as any other third-party software.

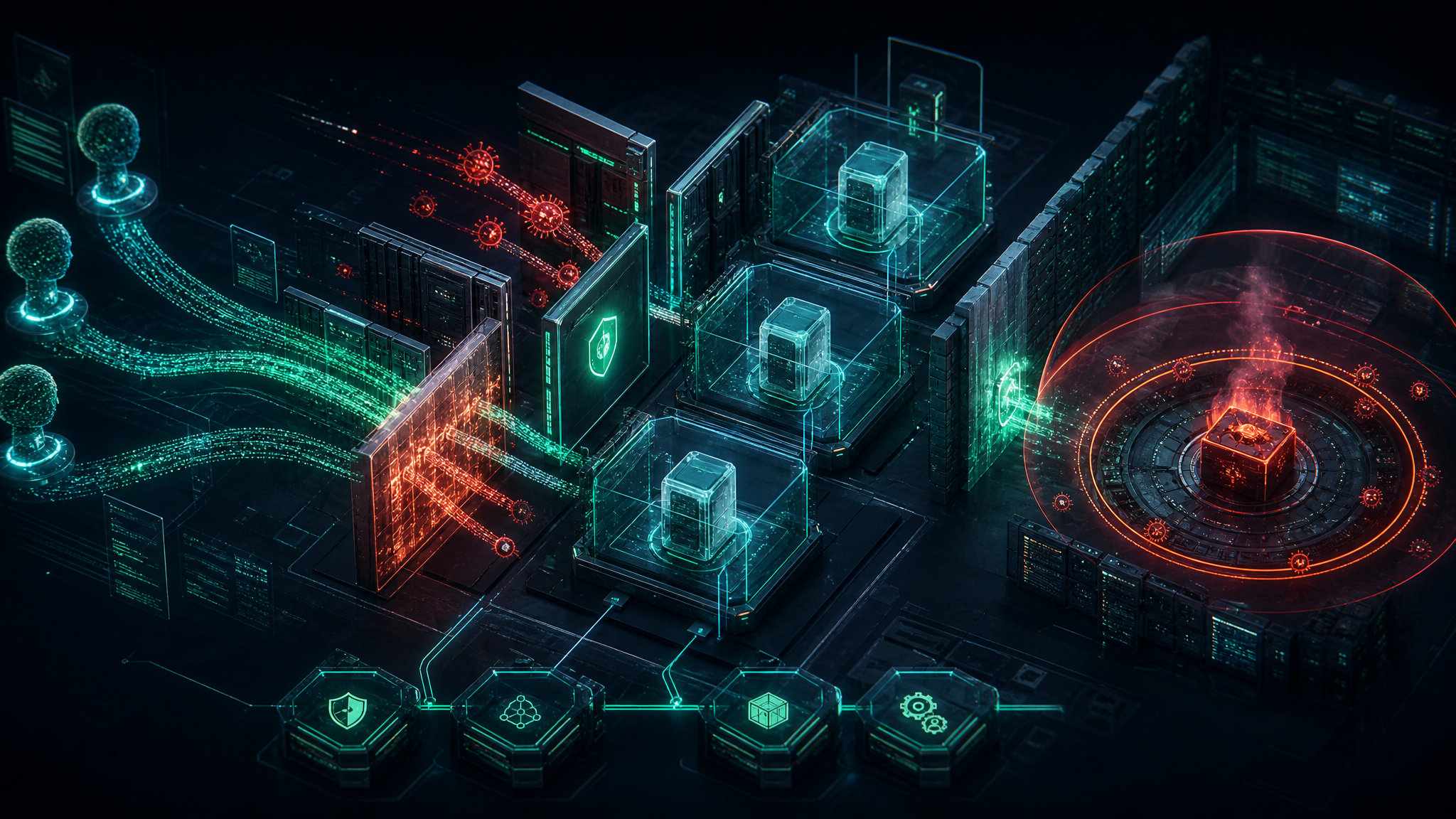

- Run unknown models in disposable environments. Use isolated VMs, containers, or cloud sandboxes with no access to production credentials, browser profiles, SSH keys, wallet data, or shared drives.

- Block credential sprawl on workstations. Reduce saved browser passwords, avoid long-lived cloud tokens on endpoints, and separate admin accounts from day-to-day browsing and experimentation.

- Monitor for suspicious script chains. Alert on Python launching PowerShell, PowerShell downloading batch files, Defender exclusions being added, and scheduled tasks impersonating browser or Microsoft update names.

- Verify source authenticity. Check publisher identity, repository history, commit age, linked official announcements, package checksums, and whether popularity appears organic.

- Have an infostealer playbook. Cleanup is not enough. Assume browser sessions, tokens, SSH keys, wallet seeds, VPN configs, and SaaS sessions are compromised until rotated or invalidated.
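
The script-chain monitoring described in the list above can be sketched as a small rule matcher. This is a minimal illustration, not a production detection. The event shape (dicts with `parent`, `image`, and `command_line` keys) is a hypothetical stand-in for whatever your EDR or process-creation logging exports, and the rule names are invented here. `Add-MpPreference` is the PowerShell cmdlet commonly used to add Defender exclusions; the other patterns are assumptions based on the behavior described in this post.

```python
import re

# Hypothetical event shape (an assumption, not a real log schema):
#   {"parent": "<parent image path>", "image": "<process image path>",
#    "command_line": "<full command line>"}

# Matches python, python3, python3.11, pythonw, with or without .exe.
PYTHON_RE = re.compile(r"(^|[\\/])python(w|\d+(\.\d+)?)?(\.exe)?$", re.IGNORECASE)
# Matches Windows PowerShell and PowerShell 7 (pwsh).
POWERSHELL_RE = re.compile(r"(^|[\\/])(powershell|pwsh)(\.exe)?$", re.IGNORECASE)
# Add-MpPreference / Set-MpPreference with any -Exclusion* parameter.
DEFENDER_EXCLUSION_RE = re.compile(r"(Add|Set)-MpPreference\b.*-Exclusion", re.IGNORECASE)
# A URL ending in .bat or .cmd anywhere on the command line.
BATCH_DOWNLOAD_RE = re.compile(r"https?://\S+\.(bat|cmd)\b", re.IGNORECASE)


def classify(event: dict) -> list[str]:
    """Return the names of the illustrative rules this event triggers."""
    parent = event.get("parent", "")
    image = event.get("image", "")
    cmd = event.get("command_line", "")

    hits = []
    is_powershell = bool(POWERSHELL_RE.search(image))
    if PYTHON_RE.search(parent) and is_powershell:
        hits.append("python_spawns_powershell")
    if is_powershell and BATCH_DOWNLOAD_RE.search(cmd):
        hits.append("powershell_downloads_batch")
    if DEFENDER_EXCLUSION_RE.search(cmd):
        hits.append("defender_exclusion_added")
    return hits


def scan(events):
    """Return (event, hits) pairs for every event that triggers a rule."""
    return [(event, hits) for event in events if (hits := classify(event))]
```

Each rule will produce false positives on its own, since developers legitimately call PowerShell from Python. The combination of several hits on one host in a short window is the stronger signal. Scheduled-task name impersonation is not covered by this sketch.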

Bulwark Black Assessment

This is exactly the kind of attack that will keep growing as AI adoption spreads through engineering, security, analytics, and operations teams. Attackers do not need to exploit a zero-day if they can get a trusted user to run a fake model loader on a machine full of credentials. The “AI” label lowers skepticism, and trending metrics create a false sense of safety.

The practical answer is not to ban AI research tools outright. The answer is to make experimentation safe by default: isolated environments, least-privilege tokens, endpoint controls that catch script abuse, and a policy that treats copied commands from model cards the same way we treat random shell scripts from the internet.
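
One way to put that policy into practice is a quick triage of a cloned repository before anyone runs its loader. The sketch below is a heuristic, not a malware scanner. The `triage_repo` name, the pattern list, and the file extensions are assumptions chosen to reflect the behaviors described in this post, and an empty result says nothing about whether a repository is safe.

```python
import re
from pathlib import Path

# File types worth a human look before execution (an assumed, non-exhaustive list).
SCRIPT_SUFFIXES = {".py", ".bat", ".cmd", ".ps1", ".sh", ".ipynb"}

# Illustrative patterns only; legitimate code matches many of these too.
SUSPICIOUS_PATTERNS = {
    "spawns_powershell": re.compile(r"powershell|pwsh", re.IGNORECASE),
    "spawns_process": re.compile(r"\bsubprocess\.|\bos\.system\s*\("),
    "fetches_url": re.compile(r"https?://", re.IGNORECASE),
    "decodes_base64": re.compile(r"b64decode|FromBase64String", re.IGNORECASE),
    "dynamic_exec": re.compile(r"\b(exec|eval)\s*\("),
}


def triage_repo(root: str) -> dict[str, list[str]]:
    """Map each script file under root to the pattern labels it matches."""
    findings = {}
    for path in sorted(Path(root).rglob("*")):
        if not path.is_file() or path.suffix.lower() not in SCRIPT_SUFFIXES:
            continue
        # Read as text without executing anything; undecodable bytes are replaced.
        text = path.read_text(encoding="utf-8", errors="replace")
        labels = [name for name, rx in SUSPICIOUS_PATTERNS.items() if rx.search(text)]
        if labels:
            findings[path.relative_to(root).as_posix()] = labels
    return findings
```

A loader that both spawns a process and fetches a remote URL is not automatically malicious, but it is the kind of file a reviewer should read before it runs anywhere outside a disposable environment.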

Source: BleepingComputer, “Fake OpenAI repository on Hugging Face pushes infostealer malware.” Technical details and IOCs: HiddenLayer research.