A critical vulnerability in Langflow, the popular open-source visual framework for building AI agents and RAG pipelines, was weaponized by threat actors within just 20 hours of public disclosure—before any proof-of-concept code was publicly available.

The Vulnerability

Tracked as CVE-2026-33017 (CVSS 9.3), the vulnerability is an unauthenticated remote code execution (RCE) flaw affecting the /api/v1/build_public_tmp/{flow_id}/flow endpoint in Langflow versions 1.8.1 and earlier.
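As a rough way to triage an inventory against the affected range stated above, the comparison can be sketched in Python. The helper names `parse_version` and `is_affected` are hypothetical, and the sketch assumes plain dotted release strings such as 1.8.1; pre-release or local version suffixes would need a proper version parser.

```python
def parse_version(version: str) -> tuple[int, ...]:
    """Turn a plain dotted release string such as '1.8.1' into (1, 8, 1)."""
    return tuple(int(part) for part in version.strip().split("."))


# Upper bound of the affected range as stated in the article: 1.8.1 and earlier.
LAST_AFFECTED = parse_version("1.8.1")


def is_affected(installed: str) -> bool:
    """Return True if the installed release falls in the affected range."""
    return parse_version(installed) <= LAST_AFFECTED


for candidate in ("1.7.3", "1.8.1", "1.9.0"):
    status = "affected" if is_affected(candidate) else "not in the affected range"
    print(f"Langflow {candidate}: {status}")
```

A version string alone does not prove exposure; whether the endpoint is reachable from the internet matters just as much.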

The endpoint, designed to allow unauthenticated users to build public flows, accepts attacker-supplied flow data containing arbitrary Python code in node definitions. This code is then passed directly to Python’s exec() function with zero sandboxing, enabling full server compromise with a single HTTP request.
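The general pattern behind this class of flaw can be shown with a deliberately harmless sketch. This is not Langflow's code: `build_node_unsafely` is a hypothetical stand-in for any builder that hands caller-supplied source to exec(), and the "secret" is a dummy value set by the example itself.

```python
import os


def build_node_unsafely(node_code: str) -> dict:
    """Hypothetical builder that trusts whatever source the caller supplies."""
    namespace: dict = {}
    # exec() runs the string with the full privileges of the server process:
    # it can import modules, read files, and read the process environment.
    exec(node_code, namespace)
    return namespace


# Dummy value standing in for a real credential held by the server process.
os.environ["DEMO_API_KEY"] = "not-a-real-key"

# Harmless stand-in for attacker-supplied node code.
untrusted_code = "import os\nleaked = os.environ.get('DEMO_API_KEY')"

result = build_node_unsafely(untrusted_code)
print(result["leaked"])
```

Passing a restricted namespace to exec() is not a sandbox, since the supplied code can still import modules. The more robust options are to avoid executing caller-supplied code at all or to require authentication and isolate the process that runs it.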

“Exploiting CVE-2026-33017 is extremely easy,” explained security researcher Aviral Srivastava, who discovered the flaw. “One HTTP POST request with malicious Python code in the JSON payload is enough to achieve immediate remote code execution.”

Attack Timeline: From Advisory to Exploitation in 20 Hours

According to Sysdig’s Threat Research Team, the exploitation timeline was alarming:

- March 17, 20:05 UTC — Advisory published on GitHub

- March 18, 16:04 UTC — First exploitation attempt observed

- March 18, 16:39 UTC — Sustained scanning begins across multiple nodes

- March 18, 20:55 UTC — Attackers progress to environment variable exfiltration

“No public proof-of-concept (PoC) code existed at the time,” Sysdig reported. “Attackers built working exploits directly from the advisory description and began scanning the internet for vulnerable instances.”
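The intervals implied by the timeline can be checked with a few lines of Python. The timestamps come from the list above; the year 2026 is an assumption inferred from the CVE identifier, and the helper names are illustrative.

```python
from datetime import datetime, timedelta, timezone


def utc(month: int, day: int, hour: int, minute: int) -> datetime:
    """Build a UTC timestamp; the year 2026 is assumed from the CVE identifier."""
    return datetime(2026, month, day, hour, minute, tzinfo=timezone.utc)


advisory = utc(3, 17, 20, 5)
events = {
    "first exploitation attempt": utc(3, 18, 16, 4),
    "sustained scanning": utc(3, 18, 16, 39),
    "environment variable exfiltration": utc(3, 18, 20, 55),
}


def hours_minutes(delta: timedelta) -> tuple[int, int]:
    """Split a time difference into whole hours and remaining minutes."""
    total_minutes = int(delta.total_seconds() // 60)
    return divmod(total_minutes, 60)


elapsed = {label: hours_minutes(when - advisory) for label, when in events.items()}
for label, (hours, minutes) in elapsed.items():
    print(f"{label}: {hours}h {minutes:02d}m after the advisory")
```

The first attempt lands 19 hours 59 minutes after publication, which matches the "within 20 hours" figure, and exfiltration follows at 24 hours 50 minutes.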

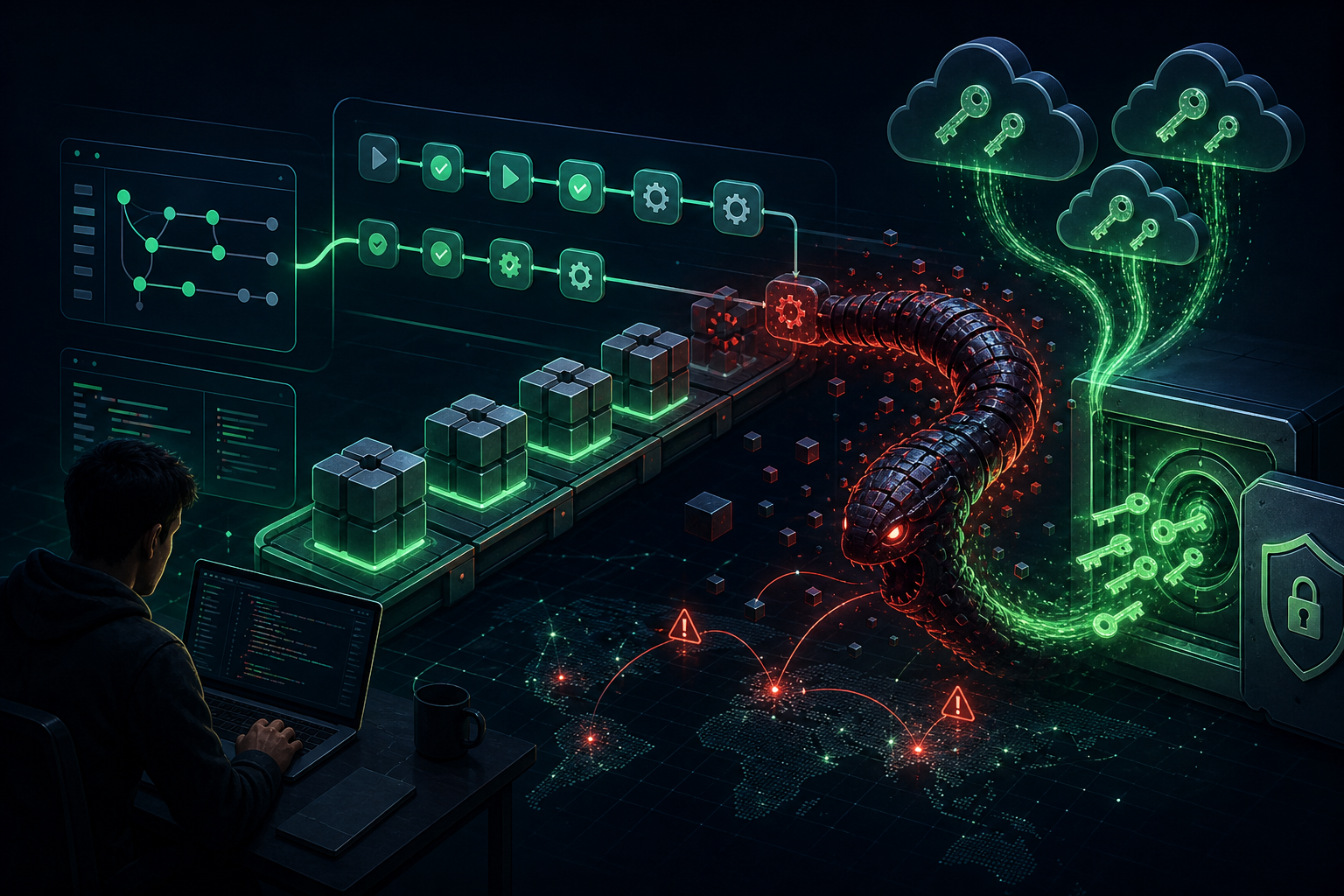

Three Phases of Attack

Phase 1: Automated Scanning

Within hours of disclosure, attackers deployed nuclei scanning templates to identify vulnerable instances. Scanning traffic came from multiple VPS providers across Germany, Singapore, and other regions. The attackers used interactsh callback servers to validate successful exploitation.

Phase 2: Custom Exploit Scripts

More sophisticated attackers moved beyond validation, executing a methodical kill chain:

- Directory listing and credential file enumeration

- System fingerprinting via the id command

- Stage-2 payload delivery via curl from pre-staged infrastructure

Phase 3: Data Harvesting

The most advanced attackers conducted thorough credential harvesting, dumping environment variables, enumerating configuration files and databases, and extracting .env files containing API keys for OpenAI, Anthropic, AWS, and database credentials.
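To gauge what an environment dump would hand an attacker on a given host, the names of secret-looking variables can be listed with a short script. This is a minimal sketch: the name patterns are an assumed heuristic rather than a complete list, and `secret_like_names` is a hypothetical helper. It prints names only, never values.

```python
import os
import re

# Assumed heuristic: variable names that commonly hold credentials.
SECRET_NAME = re.compile(
    r"(KEY|TOKEN|SECRET|PASSWORD|PASSWD|CREDENTIAL|DATABASE_URL)",
    re.IGNORECASE,
)


def secret_like_names(environ) -> list[str]:
    """Return the sorted variable names that look like they hold secrets."""
    return sorted(name for name in environ if SECRET_NAME.search(name))


# Print names only, so the audit itself does not leak values into logs.
for name in secret_like_names(os.environ):
    print(name)
```

Anything this lists on an instance that was exposed is a candidate for rotation.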

Why AI Workloads Are Prime Targets

Langflow’s 145,000+ GitHub stars point to a large installed base, and with it a large pool of potentially exposed instances. Several factors made CVE-2026-33017 particularly attractive:

- No authentication required — The vulnerable endpoint is publicly accessible by design

- Simple exploitation — Single HTTP POST with JSON payload; no multi-step chains

- High-value targets — Langflow instances contain API keys and database connections

- Supply chain compromise potential — Access to cloud accounts and data stores

The Shrinking Patch Window

This incident aligns with an accelerating trend. According to Rapid7’s 2026 Global Threat Landscape Report, the median time from vulnerability publication to inclusion in CISA’s Known Exploited Vulnerabilities catalog dropped from 8.5 days to just 5 days over the past year.

Meanwhile, the median time for organizations to deploy patches is approximately 20 days. This gap means defenders remain exposed while attackers weaponize vulnerabilities within hours.

Recommended Actions

- Update to Langflow version 1.9.0 or later immediately

- Audit environment variables and secrets on any exposed Langflow instances

- Rotate API keys and database passwords as a precautionary measure

- Monitor for outbound connections to unusual callback services

- Restrict network access using firewall rules or a reverse proxy with authentication
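For the monitoring step, one starting point is to search reverse-proxy access logs for POST requests to the affected endpoint. The sketch below assumes a common access-log layout in which the request line appears in double quotes. The sample lines are invented for illustration and use documentation IP ranges, and `suspicious_lines` is a hypothetical helper.

```python
import re

# Matches a quoted request line such as:
#   "POST /api/v1/build_public_tmp/<flow_id>/flow HTTP/1.1"
ENDPOINT = re.compile(r'"POST\s+/api/v1/build_public_tmp/[^/\s]+/flow(?:\?\S*)?\s')


def suspicious_lines(lines) -> list[str]:
    """Return the log lines that contain a POST to the affected endpoint."""
    return [line.rstrip("\n") for line in lines if ENDPOINT.search(line)]


# Invented sample lines, not real traffic.
sample_log = [
    '203.0.113.7 - - [01/Jan/2026:00:00:01 +0000] "POST /api/v1/build_public_tmp/abc123/flow HTTP/1.1" 200 512',
    '198.51.100.2 - - [01/Jan/2026:00:00:02 +0000] "GET /health HTTP/1.1" 200 2',
]

hits = suspicious_lines(sample_log)
for line in hits:
    print(line)
```

Legitimate public flows use the same endpoint, so a hit is a lead to investigate rather than proof of compromise. Correlating hits with outbound connections made shortly afterward narrows the list.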

The Bottom Line

CVE-2026-33017 demonstrates that critical vulnerabilities in AI infrastructure can be weaponized within hours of disclosure, even before public PoC code exists. Organizations running AI pipelines should revisit their vulnerability management programs, because a patch cycle measured in weeks cannot keep pace with exploitation measured in hours.

Source: The Hacker News | Sysdig Threat Research