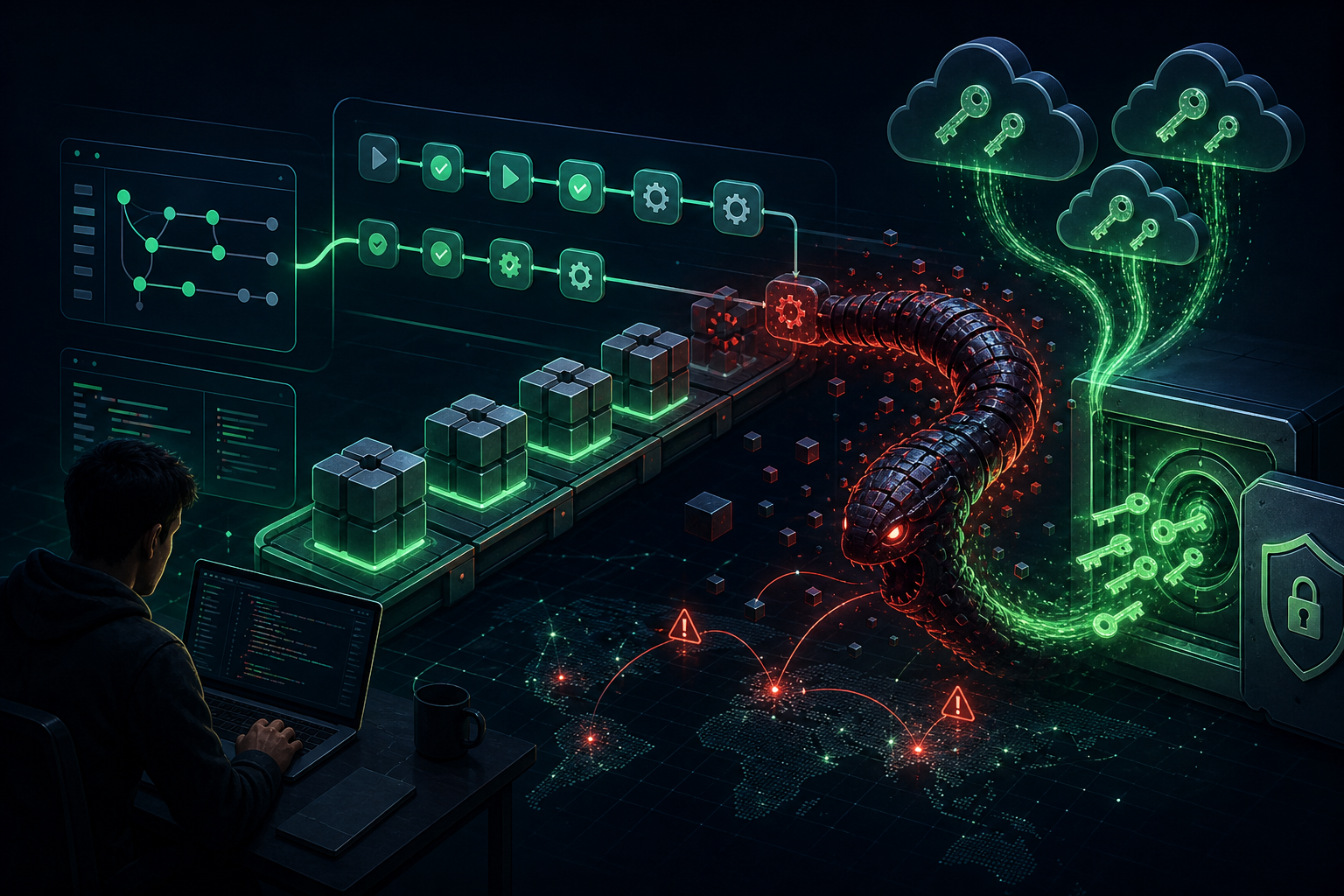

Pakistan-aligned threat actor Transparent Tribe (APT36) has embraced AI-assisted malware development to flood Indian government networks with disposable, polyglot implants. Security researchers are calling the resulting malware “vibeware” and the overall strategy Distributed Denial of Detection (DDoD).

AI-Powered Malware Industrialization

According to Bitdefender’s research, APT36 has shifted from sophisticated, handcrafted implants to high-volume, AI-generated malware written in obscure programming languages including Nim, Zig, and Crystal. This approach prioritizes quantity over quality, overwhelming defensive telemetry with a constant stream of unique binary signatures.

“We are seeing a transition toward AI-assisted malware industrialization that allows the actor to flood target environments with disposable, polyglot binaries,” the researchers noted. While the resulting tools are often unstable and contain logical errors, the sheer volume compensates for individual implant deficiencies.
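To illustrate why a stream of unique binaries strains signature-based defenses, here is a minimal sketch of an exact-hash blocklist. The byte strings are placeholder stand-ins rather than real samples, and the names `known_bad` and `is_blocked` are hypothetical; the point is only that a one-byte change yields a hash the blocklist has never seen.

```python
import hashlib


def sha256_hex(data: bytes) -> str:
    """Return the SHA-256 digest of the given bytes as a hex string."""
    return hashlib.sha256(data).hexdigest()


# Hypothetical blocklist holding the hash of one previously seen sample.
# The byte strings here are placeholders, not real malware.
known_bad = {sha256_hex(b"placeholder-implant-v1")}


def is_blocked(sample: bytes) -> bool:
    """Exact-match lookup, as a purely hash-based signature would do."""
    return sha256_hex(sample) in known_bad


print(is_blocked(b"placeholder-implant-v1"))  # True: identical bytes match
print(is_blocked(b"placeholder-implant-v2"))  # False: one byte differs, the hash no longer matches
```

Each regenerated or recompiled variant would need its own blocklist entry, which is the cost the actor appears to be exploiting.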

Primary Targets: Indian Government and Embassies

The campaign specifically targets:

- Indian government agencies – Primary focus of the espionage operation

- Indian embassies – Targeting diplomatic missions in multiple foreign countries

- Afghan government entities – Secondary targeting with reduced intensity

- Private sector organizations – Limited but ongoing reconnaissance

APT36 operators use LinkedIn for initial reconnaissance, identifying high-value targets before launching phishing campaigns.

Infection Chain: Phishing to Backdoor

The attack typically proceeds through the following stages:

- Phishing emails containing Windows shortcuts (LNKs) bundled in ZIP archives or ISO images

- Alternatively, PDF lures with prominent “Download Document” buttons redirecting to attacker-controlled sites

- PowerShell scripts executed in memory to download and run the main backdoor

- Deployment of Cobalt Strike and Havoc adversary simulation frameworks for post-compromise persistence
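As a rough sketch of how a mail gateway or triage script might catch the first stage described above, the following lists shortcut or disk-image files inside a ZIP attachment. The extension list and the function name `find_suspicious_members` are assumptions made for illustration; the function inspects archive member names only and does not parse the files themselves.

```python
import io
import zipfile

# Assumed extensions of interest, based on the delivery formats named above.
SUSPICIOUS_EXTENSIONS = (".lnk", ".iso")


def find_suspicious_members(zip_bytes: bytes) -> list[str]:
    """Return names of archive members ending in a suspicious extension.

    Only member names are checked; nothing is extracted or executed.
    """
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as archive:
        return [
            name
            for name in archive.namelist()
            if name.lower().endswith(SUSPICIOUS_EXTENSIONS)
        ]
```

A caller would pass the raw attachment bytes and quarantine or flag the message when the returned list is non-empty. Name-based checks are easy to evade, so this complements rather than replaces content inspection.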

Arsenal of AI-Generated Malware

The campaign deploys an extensive toolkit, much of it bearing telltale signs of AI-assisted development (including Unicode emojis in code):

Loaders and Droppers

- Warcode – Crystal-based shellcode loader that reflectively loads Havoc agents into memory

- NimShellcodeLoader – Nim-based loader deploying Cobalt Strike beacons

- CreepDropper – .NET malware delivering additional payloads

- ZigLoader – Zig-based loader for in-memory shellcode execution

Backdoors and C2

- SupaServ – Rust backdoor using Supabase for C2 with Firebase fallback

- CrystalShell – Cross-platform (Windows/Linux/macOS) backdoor using Discord channel IDs for C2

- ZigShell – Zig counterpart to CrystalShell, using Slack infrastructure

- Gate Sentinel Beacon – Customized open-source C2 framework

Information Stealers

- LuminousStealer – Rust-based file exfiltrator targeting documents, images, and archives via Firebase/Google Drive

- LuminousCookies – Browser credential stealer bypassing Chromium’s app-bound encryption

- SHEETCREEP – Go-based infostealer using Microsoft Graph API

- MAILCREEP – C# backdoor using Google Sheets for C2

- BackupSpy – Utility monitoring local filesystem and external media for sensitive data

Living Off Trusted Services

A key characteristic of this campaign is the abuse of legitimate cloud services for command-and-control:

- Slack – C2 communication via channel webhooks

- Discord – Hardcoded channel IDs for backdoor control

- Google Sheets – Data exfiltration and command retrieval

- Supabase/Firebase – Backend-as-a-Service platforms for C2 infrastructure

- Google Drive – File exfiltration endpoint

This technique allows malicious traffic to blend with legitimate network activity, complicating detection efforts.
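One way defenders might approach this is to ask which process is talking to a trusted service, not only which service is being contacted. The sketch below assumes connection records (process name plus destination host) are already available from endpoint or proxy telemetry. The domain suffixes, the expected-process list, and the names `Connection` and `flag_unexpected` are illustrative assumptions, not a vetted detection rule.

```python
from dataclasses import dataclass

# Assumed domain suffixes for the services named above; tune for your environment.
WATCHED_SUFFIXES = (
    "slack.com",
    "discord.com",
    "supabase.co",
    "firebaseio.com",
    "googleapis.com",
)

# Assumed set of processes expected to reach these services on a typical host.
EXPECTED_PROCESSES = {"chrome.exe", "msedge.exe", "firefox.exe", "slack.exe", "discord.exe"}


@dataclass(frozen=True)
class Connection:
    process: str  # name of the process that opened the connection
    host: str     # destination hostname


def host_matches(host: str, suffix: str) -> bool:
    """True if host equals the suffix or is a subdomain of it."""
    host = host.lower().rstrip(".")
    return host == suffix or host.endswith("." + suffix)


def flag_unexpected(connections: list[Connection]) -> list[Connection]:
    """Return connections to watched services made by unexpected processes."""
    return [
        conn
        for conn in connections
        if conn.process.lower() not in EXPECTED_PROCESSES
        and any(host_matches(conn.host, suffix) for suffix in WATCHED_SUFFIXES)
    ]
```

Process names can be spoofed, so in practice a check like this would likely be paired with signer or file-path verification.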

Why AI-Generated Malware Matters

Bitdefender characterizes APT36’s approach as a “technical regression”—the AI-assisted tools are often buggy and unstable. However, the strategy reflects a fundamental shift in threat actor economics:

“We are seeing a convergence of two trends: the adoption of exotic, niche programming languages, and the abuse of trusted services to hide in legitimate network traffic. This combination allows even mediocre code to achieve high operational success by simply overwhelming standard defensive telemetry.”

Large language models (LLMs) have collapsed the expertise gap, enabling threat actors to generate functional code in unfamiliar languages by porting core logic from more common ones—or creating it from scratch with minimal programming knowledge.

Recommendations

Organizations should:

- Implement behavioral detection beyond signature-based approaches

- Monitor for unusual cloud service API activity (Slack, Discord, Google services)

- Train employees to recognize LinkedIn-based reconnaissance and social engineering

- Block or monitor ISO/LNK file execution from external sources

- Deploy application allowlisting to block unapproved binaries, including those compiled from uncommon languages such as Nim, Zig, and Crystal

Source: The Hacker News / Bitdefender