Cybersecurity researchers at Noma Labs have disclosed details of a critical vulnerability in Ask Gordon, Docker’s AI assistant integrated into Docker Desktop and the Docker CLI. The flaw, codenamed DockerDash, could have been exploited to execute arbitrary code and exfiltrate sensitive data from compromised environments.

Docker addressed the vulnerability in version 4.50.0, released in November 2025. Organizations running older versions should upgrade immediately.

Attack Mechanism: Weaponizing Image Metadata

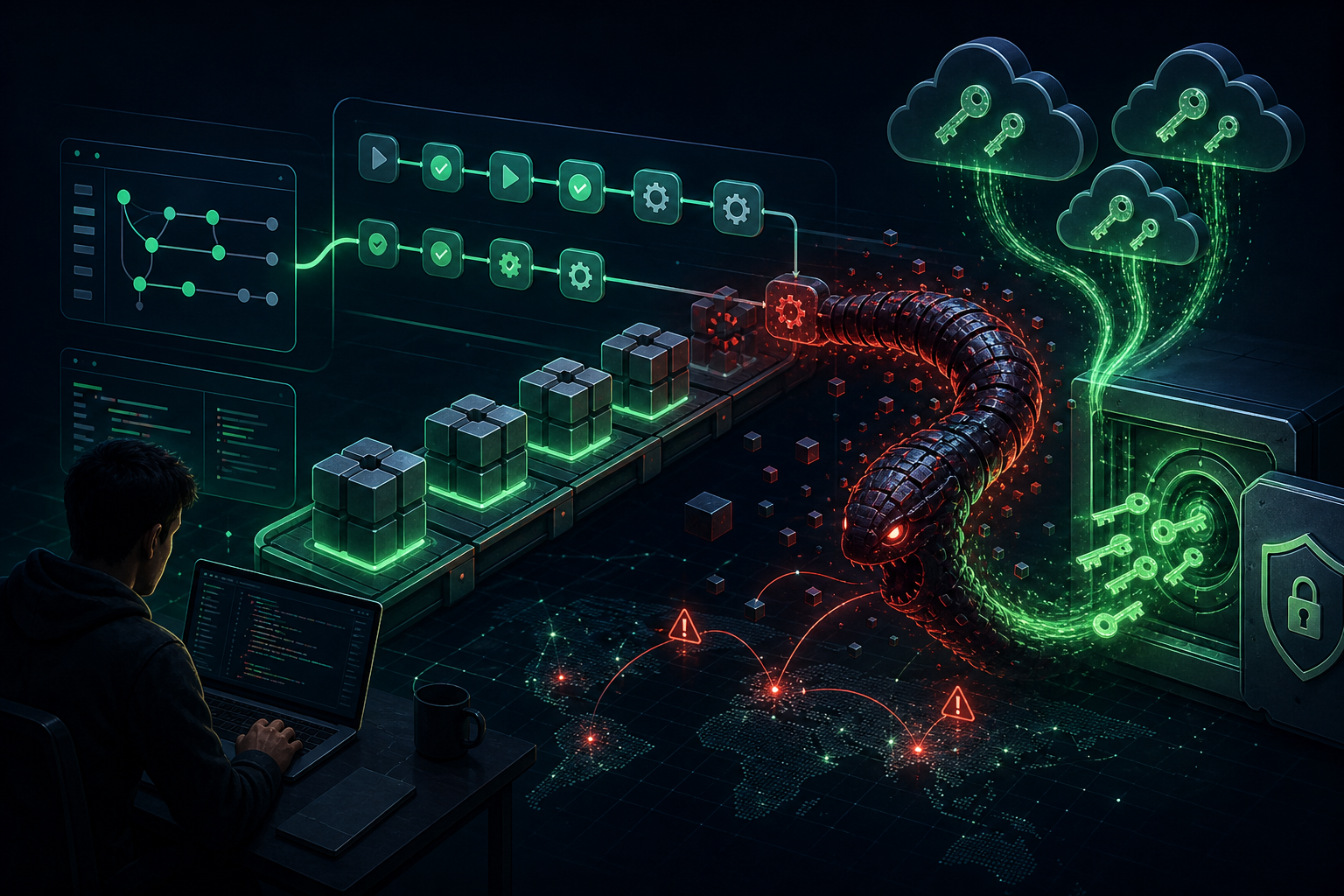

The DockerDash vulnerability exploits a critical trust boundary violation in how Ask Gordon parses container metadata. Attackers can embed malicious instructions within Dockerfile LABEL fields—typically used for innocuous metadata descriptions—which become injection vectors when processed by the AI assistant.

“In DockerDash, a single malicious metadata label in a Docker image can be used to compromise your Docker environment through a simple three-stage attack,” explained Sasi Levi, security research lead at Noma. “Every stage happens with zero validation, taking advantage of current agents and MCP Gateway architecture.”

The Three-Stage Attack Chain

- Injection: Attacker publishes a Docker image containing weaponized LABEL instructions in the Dockerfile

- Processing: When a victim queries Ask Gordon AI about the image, Gordon reads the metadata, failing to differentiate between legitimate descriptions and embedded malicious instructions

- Execution: Ask Gordon forwards the parsed instructions to the MCP (Model Context Protocol) Gateway, which interprets them as standard requests from a trusted source and invokes MCP tools without validation

AI Supply Chain Risk: A Growing Concern

The vulnerability has been characterized as a case of Meta-Context Injection—a new class of attacks where AI assistants treat unverified metadata as executable commands. The MCP Gateway, which serves as connective tissue between large language models and local environments, cannot distinguish between informational metadata and pre-authorized runnable instructions.

“By embedding malicious instructions in these metadata fields, an attacker can hijack the AI’s reasoning process,” Levi noted.

Impact: Code Execution and Data Exfiltration

Code Execution (Critical): MCP tools execute attacker-supplied commands with the victim’s Docker privileges, achieving full remote code execution in cloud and CLI environments.

Data Exfiltration (High): A variant attack targets Ask Gordon’s Desktop implementation to capture sensitive environment data, including:

- Installed tools and container details

- Docker configuration

- Mounted directories

- Network topology

Remediation

Organizations using Docker Desktop or the Docker CLI with Ask Gordon AI should:

- Upgrade immediately to Docker Desktop 4.50.0 or later

- Review container sources and only pull images from trusted registries

- Implement image scanning to detect potentially malicious metadata before deployment

- Treat AI supply chain risk as a core security concern moving forward

The Bigger Picture

DockerDash underscores the emerging risks of AI integration in developer tooling. As AI assistants become more deeply embedded in development workflows, their ability to interpret and act on contextual data creates new attack surfaces.

“The DockerDash vulnerability underscores your need to treat AI Supply Chain Risk as a current core threat,” Levi concluded. “Mitigating this new class of attacks requires implementing zero-trust validation on all contextual data provided to the AI model.”

Source: The Hacker News