In a stark demonstration of how artificial intelligence is transforming the cybersecurity threat landscape, the Sysdig Threat Research Team (TRT) has documented a sophisticated cloud intrusion where attackers achieved full administrative control of an AWS environment in less than 10 minutes — with strong evidence that large language models (LLMs) were used to automate the entire attack chain.

The incident, observed on November 28, 2025, represents a significant escalation in the speed and sophistication of cloud attacks, highlighting the urgent need for organizations to implement automated defenses capable of matching the pace of AI-driven offensive operations.

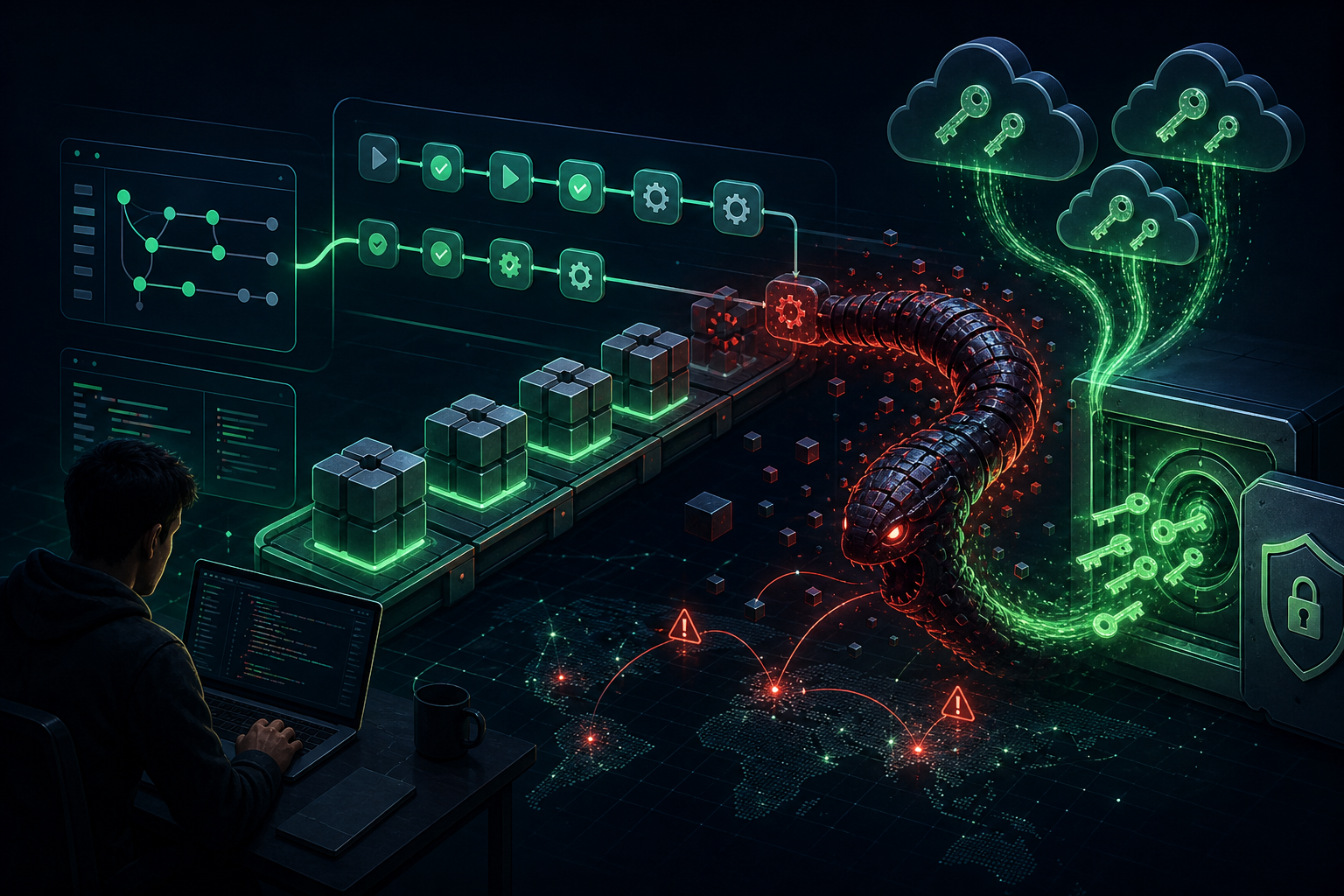

The Attack Chain: From Exposed Credentials to Full Compromise

Initial Access: Hunting AI Infrastructure

The attack began when threat actors discovered valid credentials stored in public S3 buckets. Notably, these buckets were named using common AI terminology — suggesting the attackers were specifically targeting organizations with AI/ML infrastructure. The compromised credentials belonged to an IAM user with permissions on AWS Lambda and Amazon Bedrock, likely created to automate AI tasks.

Privilege Escalation: Lambda Code Injection

After initial reconnaissance across multiple AWS services (Secrets Manager, SSM, EC2, ECS, RDS, and more), the attackers exploited Lambda permissions to escalate privileges. They modified an existing Lambda function called EC2-init, injecting Python code that:

- Listed all IAM users and their access keys

- Created new administrative access keys for a privileged user

- Enumerated S3 buckets and their contents

The malicious code contained comments written in Serbian, potentially indicating the threat actor’s origin. More significantly, the code’s structure — including comprehensive exception handling and the speed of iteration — strongly suggests it was generated by an LLM.

Evidence of AI Hallucinations

Multiple indicators point to LLM-assisted attack automation:

- Hallucinated AWS account IDs: The attackers attempted to assume roles in account IDs like 123456789012 and 210987654321, sequential test numbers that don’t exist in production environments

- Non-existent GitHub repositories: Scripts referenced https://github.com/anthropic/training-scripts.git, a repository that doesn’t exist and a classic LLM hallucination

- Session naming: Role assumption attempts used revealing session names like “explore,” “pwned,” “escalation,” and notably “claude-session”
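Defenders can turn these indicators into a simple check over CloudTrail records. The sketch below is illustrative only: it assumes each record is a dict with the usual CloudTrail `eventName` and `requestParameters` (`roleArn`, `roleSessionName`) fields, and the function name `flag_assume_role` is hypothetical. The account IDs and session names are the ones reported above.

```python
import re

# Indicators taken from the report; extend with your own as needed.
PLACEHOLDER_ACCOUNT_IDS = {"123456789012", "210987654321"}
SUSPICIOUS_SESSION_NAMES = {"explore", "pwned", "escalation", "claude-session"}

# Extracts the 12-digit account ID from an IAM role ARN.
ROLE_ARN_RE = re.compile(r"^arn:aws:iam::(\d{12}):role/.+$")


def flag_assume_role(event: dict) -> list:
    """Return the reasons an AssumeRole record looks suspicious (empty if none)."""
    if event.get("eventName") != "AssumeRole":
        return []
    params = event.get("requestParameters") or {}
    reasons = []

    match = ROLE_ARN_RE.match(params.get("roleArn", ""))
    if match and match.group(1) in PLACEHOLDER_ACCOUNT_IDS:
        reasons.append(f"placeholder account ID {match.group(1)}")

    session = params.get("roleSessionName", "")
    if session.lower() in SUSPICIOUS_SESSION_NAMES:
        reasons.append(f"suspicious session name {session!r}")

    return reasons
```

A static list like this only catches a careless attacker. It is best treated as one cheap signal among several.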

LLMjacking: Stealing AI Compute

With administrative access secured, the attackers pivoted to what Sysdig calls “LLMjacking” — abusing Amazon Bedrock to invoke multiple AI models at the victim’s expense. They invoked high-end models including:

- Claude Sonnet 4 and Claude Opus 4

- Claude 3.5 Sonnet and Claude 3 Haiku

- DeepSeek R1

- Llama 4 Scout

- Amazon Nova Premier and Titan Image Generator

The attackers also created a Terraform module designed to deploy a backdoor Lambda function that would generate Bedrock credentials via a publicly accessible URL — requiring no authentication.
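One way to look for this kind of backdoor is to audit Lambda function URLs that accept unauthenticated requests. The report does not say which mechanism the Terraform module used, so the sketch below rests on an assumption: that the endpoint was a Lambda function URL. It expects a list of dicts shaped like the `FunctionUrlConfigs` entries returned by the Lambda `ListFunctionUrlConfigs` API; the helper name is hypothetical.

```python
def unauthenticated_function_urls(url_configs: list) -> list:
    """Return the function URLs whose AuthType is NONE (no IAM auth required)."""
    return [
        cfg["FunctionUrl"]
        for cfg in url_configs
        if cfg.get("AuthType") == "NONE"
    ]
```

Any URL this returns deserves a manual review, since some public endpoints are intentional.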

GPU Instance Hijacking: $23,600/Month at Stake

The threat actors attempted to launch p5.48xlarge GPU instances (named “stevan-gpu-monster”) for apparent model training. When these failed due to capacity constraints, they successfully launched a p4d.24xlarge instance — which would cost approximately $23,600 per month if left running.
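The monthly figure is easy to sanity-check. The sketch below assumes an on-demand rate of roughly $32.77 per hour for a p4d.24xlarge and a 720-hour (30-day) month. Neither number appears in the report, and actual pricing varies by region and over time.

```python
# Assumptions (not from the report): approximate hourly rate and a 30-day month.
ASSUMED_HOURLY_RATE_USD = 32.77
HOURS_PER_MONTH = 24 * 30


def monthly_cost(hourly_rate_usd: float, hours: int = HOURS_PER_MONTH) -> float:
    """Cost of leaving one instance running for the given number of hours."""
    return round(hourly_rate_usd * hours, 2)


if __name__ == "__main__":
    print(f"Estimated monthly cost: ${monthly_cost(ASSUMED_HOURLY_RATE_USD):,.2f}")
```

Under those assumptions the result lands close to the $23,600 cited above.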

A JupyterLab server was configured to start automatically, providing persistent backdoor access to expensive compute resources even if AWS credentials were revoked.

Critical Defensive Recommendations

Sysdig TRT recommends these essential mitigations:

1. Eliminate Long-Term Credentials

Avoid IAM users with static access keys. Use IAM roles with temporary credentials whenever possible. If long-term credentials are required, implement strict rotation policies.
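Where static keys cannot be removed yet, a rotation policy can at least be audited. The following is a minimal sketch, assuming key metadata shaped like the `AccessKeyMetadata` entries returned by the IAM `ListAccessKeys` API (`AccessKeyId`, `Status`, timezone-aware `CreateDate`). The 90-day threshold and the function name are illustrative choices, not recommendations from Sysdig.

```python
from datetime import datetime, timedelta, timezone
from typing import Optional


def stale_active_keys(key_metadata: list, max_age_days: int = 90,
                      now: Optional[datetime] = None) -> list:
    """Return IDs of active access keys older than max_age_days."""
    now = now or datetime.now(timezone.utc)
    cutoff = now - timedelta(days=max_age_days)
    return [
        key["AccessKeyId"]
        for key in key_metadata
        if key.get("Status") == "Active" and key["CreateDate"] < cutoff
    ]
```

Passing `now` explicitly keeps the check deterministic in tests. In production it defaults to the current UTC time.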

2. Secure AI-Related Infrastructure

S3 buckets containing AI data, models, or code should never be publicly accessible. Attackers are actively hunting for AI-related resources.
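A quick way to verify this is to confirm that all four S3 Block Public Access settings are enabled on each bucket. The sketch below assumes a dict shaped like the `PublicAccessBlockConfiguration` returned by the S3 `GetPublicAccessBlock` API; the helper name is hypothetical.

```python
# The four settings that make up S3 Block Public Access.
REQUIRED_FLAGS = (
    "BlockPublicAcls",
    "IgnorePublicAcls",
    "BlockPublicPolicy",
    "RestrictPublicBuckets",
)


def missing_public_access_blocks(config: dict) -> list:
    """Return the Block Public Access settings that are absent or disabled."""
    return [flag for flag in REQUIRED_FLAGS if not config.get(flag, False)]
```

An empty result means the bucket has every setting turned on. A missing key is treated the same as `False`.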

3. Apply Least Privilege to Lambda

Developers should not have UpdateFunctionCode permissions unless absolutely necessary. This permission enables privilege escalation through code injection.
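To find where this permission is granted, policies can be scanned for `Allow` statements whose actions match `lambda:UpdateFunctionCode`, including wildcards such as `lambda:*`. The sketch below is deliberately simplified. It assumes a standard IAM policy document as a dict, and it ignores `NotAction`, explicit `Deny` statements, conditions, and permission boundaries, so a match means "worth reviewing" and not "definitely exploitable".

```python
from fnmatch import fnmatchcase

# IAM action names are case-insensitive, so compare in lowercase.
TARGET_ACTION = "lambda:updatefunctioncode"


def _as_list(value):
    """IAM allows a single value or a list; normalize to a list."""
    if value is None:
        return []
    return value if isinstance(value, list) else [value]


def allows_update_function_code(policy: dict) -> bool:
    """True if any Allow statement's Action pattern matches the target action."""
    for statement in _as_list(policy.get("Statement")):
        if statement.get("Effect") != "Allow":
            continue
        for pattern in _as_list(statement.get("Action")):
            if fnmatchcase(TARGET_ACTION, pattern.lower()):
                return True
    return False
```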

4. Monitor Bedrock Usage

Create alerts for invocation of AI models not normally used by your organization. Consider implementing Service Control Policies (SCPs) to restrict which models can be invoked.
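The alerting half of this recommendation can be sketched as an allowlist check over CloudTrail records. This example assumes Bedrock invocation records carry `eventSource` set to `bedrock.amazonaws.com` and a `requestParameters.modelId` field. The set of event names and the function name are illustrative, and the allowlist must be filled with the model IDs your organization actually uses.

```python
from collections import Counter

# Assumption: the invocation-style event names worth watching.
INVOKE_EVENTS = {
    "InvokeModel",
    "InvokeModelWithResponseStream",
    "Converse",
    "ConverseStream",
}


def unexpected_model_invocations(events, allowed_model_ids) -> Counter:
    """Count Bedrock invocations per model ID that is not on the allowlist."""
    allowed = set(allowed_model_ids)
    counts = Counter()
    for event in events:
        if event.get("eventSource") != "bedrock.amazonaws.com":
            continue
        if event.get("eventName") not in INVOKE_EVENTS:
            continue
        params = event.get("requestParameters") or {}
        model_id = params.get("modelId", "<unknown>")
        if model_id not in allowed:
            counts[model_id] += 1
    return counts
```

A non-empty result is the trigger for an alert. The counts help separate a single stray call from sustained abuse.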

5. Implement Real-Time Cloud Detection

With attackers achieving admin access in under 10 minutes, organizations must deploy automated detection capable of identifying and responding to suspicious activity in real time.
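As a rough illustration of what such a rule could look like, the sketch below flags any principal that performs a sensitive action within 10 minutes of its first observed activity. It makes several assumptions: events are CloudTrail-style dicts with `eventTime` (UTC, `YYYY-MM-DDTHH:MM:SSZ`), `eventName`, and `userIdentity.arn`; the list of sensitive event names is illustrative; and "first seen" means first seen in the batch provided, which a real detector would track over a longer history.

```python
from datetime import datetime, timedelta

# Assumption: a small, illustrative set of escalation-related event names.
SENSITIVE_EVENTS = {
    "CreateAccessKey",
    "AttachUserPolicy",
    "PutUserPolicy",
    "UpdateFunctionCode20150331v2",
}


def rapid_escalations(events, window=timedelta(minutes=10)):
    """Return (principal, event name, elapsed) for sensitive actions that
    occur within `window` of the principal's first event in the batch."""
    first_seen = {}
    alerts = []
    # ISO 8601 UTC timestamps sort correctly as strings.
    for event in sorted(events, key=lambda e: e["eventTime"]):
        principal = event["userIdentity"]["arn"]
        timestamp = datetime.strptime(event["eventTime"], "%Y-%m-%dT%H:%M:%SZ")
        start = first_seen.setdefault(principal, timestamp)
        elapsed = timestamp - start
        if event["eventName"] in SENSITIVE_EVENTS and elapsed <= window:
            alerts.append((principal, event["eventName"], elapsed))
    return alerts
```

A batch function like this only shows the logic. Matching the attack speed described here would require running the same rule against a live event stream.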

The Bottom Line

This incident signals a fundamental shift in cloud security. By automating the attack chain with AI, threat actors have dramatically shortened the time from initial access to full compromise, shrinking the window defenders have to detect and respond. Organizations must prioritize automated, real-time detection to match this new pace of exploitation.

The era of “offensive AI” agents that can execute complex intrusions faster than human defenders can react is no longer theoretical — it’s here.

Source: Sysdig Threat Research Team