Security researchers at Check Point have uncovered a concerning new attack vector: threat actors can abuse AI assistants like Microsoft Copilot and xAI’s Grok to create covert command-and-control (C2) communication channels that evade traditional security tools.

The proof-of-concept demonstrates how attackers can leverage AI services with web browsing capabilities to relay commands between malicious infrastructure and compromised systems—all while appearing as legitimate traffic to security solutions.

How the Attack Works

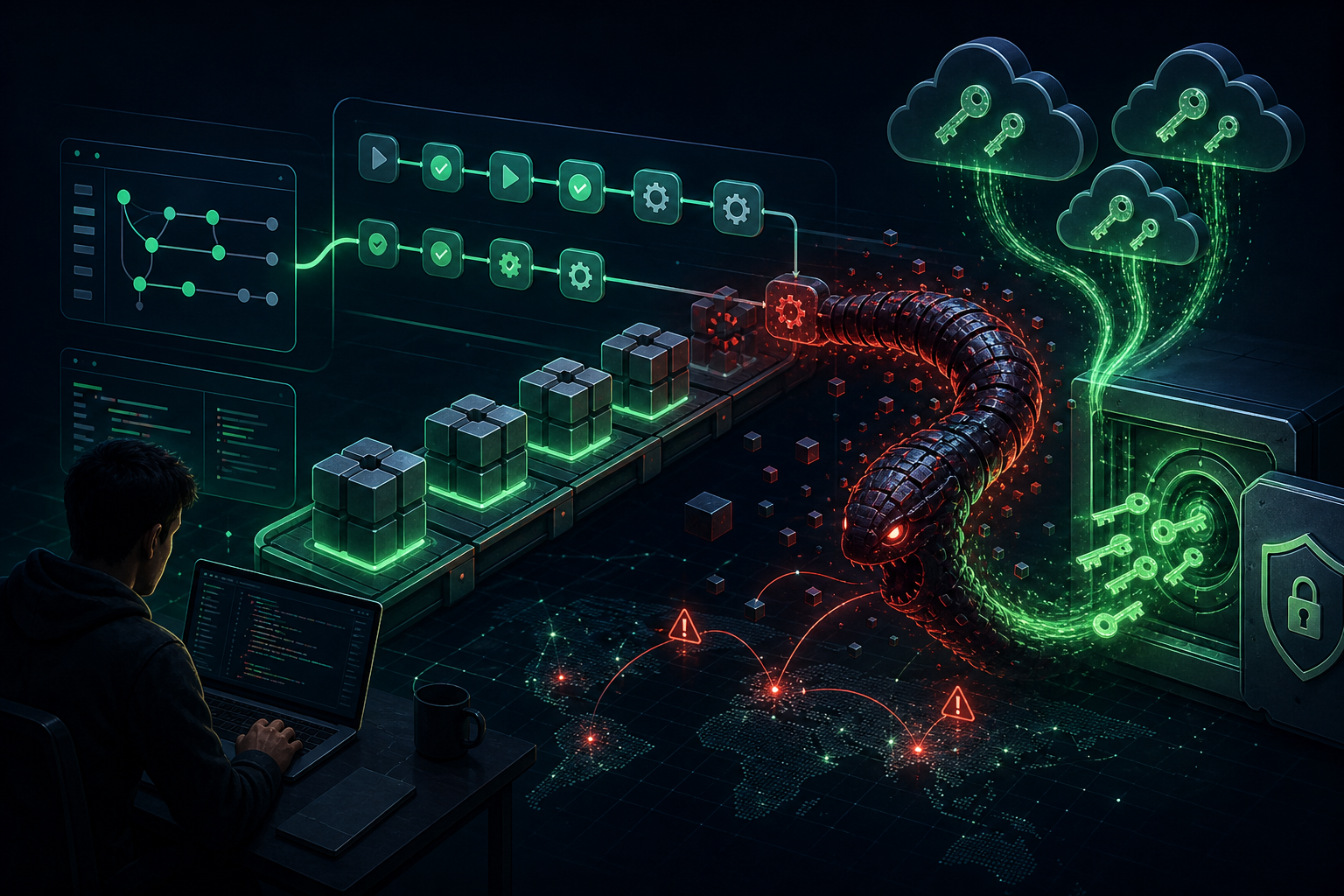

Instead of malware connecting directly to attacker-controlled C2 servers, which security tools are designed to detect, Check Point’s researchers built a method in which malware communicates through AI web interfaces.

The attack uses WebView2, the embedded browser component that ships with Windows 11, to interact with AI assistants. The researchers note that even if WebView2 is missing on a target system, attackers can bundle it with the malware payload.

The attack flow:

- Malware opens a WebView pointing to Copilot or Grok

- It submits a prompt instructing the assistant to fetch attacker-controlled URLs

- The AI assistant retrieves the content and returns it in its response

- Malware parses the AI’s response to extract the attacker’s commands; stolen data can travel the other way, embedded in the URLs the assistant is asked to fetch

This creates a bidirectional communication channel that rides on the trust network security tools typically extend to AI service domains.

Why This Attack Is Particularly Dangerous

What makes this technique especially concerning is the difficulty of blocking it:

- No API keys to revoke – The attack works through direct web interaction

- No accounts to block – Anonymous usage is allowed on many AI platforms

- Trusted traffic – AI service domains are typically on security tools’ allowlists

- Inspection evasion – Data can be encrypted into high-entropy blobs that content inspection cannot read

“The usual downside for attackers [abusing legitimate services for C2] is how easily these channels can be shut down: block the account, revoke the API key, suspend the tenant,” Check Point explains. “Directly interacting with an AI agent through a web page changes this.”
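From a defender’s side, the "high-entropy blobs" mentioned above can at least be measured. The following is a minimal sketch, not part of Check Point’s research, that computes Shannon entropy per character and flags long, unusually random-looking strings in captured prompt or response text. The function names, the 4.5-bit threshold, and the 64-character minimum length are illustrative assumptions that would need tuning against real traffic.

```python
import math
import string
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Return the Shannon entropy of data in bits per byte (0.0 to 8.0)."""
    if not data:
        return 0.0
    total = len(data)
    counts = Counter(data)
    # Sum p * log2(1/p) over every distinct byte value.
    return sum((n / total) * math.log2(total / n) for n in counts.values())


def looks_high_entropy(text: str, threshold: float = 4.5, min_length: int = 64) -> bool:
    """Flag long strings whose per-character entropy exceeds the threshold.

    Assumption: threshold and min_length are illustrative defaults, not
    values taken from the research. Short strings are skipped because
    entropy estimates on small samples are unreliable.
    """
    if len(text) < min_length:
        return False
    return shannon_entropy(text.encode("utf-8")) > threshold


if __name__ == "__main__":
    # A string that uses all 64 Base64 characters evenly, standing in for
    # encoded ciphertext, versus a highly repetitive string.
    base64_like = (string.ascii_letters + string.digits + "+/") * 2
    repetitive = "a" * 128
    for sample in (base64_like, repetitive):
        print(round(shannon_entropy(sample.encode("utf-8")), 2), looks_high_entropy(sample))
```

An entropy check like this is only a heuristic: compressed files and legitimate tokens also score high, so it is better suited to raising a signal for further review than to blocking traffic outright.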

Beyond C2: Additional AI Abuse Scenarios

Check Point warns that C2 proxy abuse is just one of several ways threat actors can weaponize AI services. Other potential abuses include:

- Operational reasoning – Using AI to assess whether a target system is worth exploiting

- Evasion planning – Leveraging AI to determine how to proceed without raising alarms

- Attack optimization – Having AI analyze and improve attack methodologies

Vendor Response and Mitigation

Check Point disclosed its findings to both Microsoft and xAI. While these platforms have safeguards that block obviously malicious exchanges, the researchers demonstrated that encrypting the data bypasses those checks.

Recommended mitigations:

- Monitor for WebView2 instances launched by processes that have no legitimate reason to embed a browser

- Implement network monitoring for AI service interactions from unexpected applications

- Consider restricting AI service access from sensitive systems

- Deploy behavioral analysis tools that can detect C2-like communication patterns
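As a rough illustration of the first two recommendations, the sketch below filters connection telemetry for AI service traffic that originates from applications not expected to use those services. It assumes the telemetry has already been resolved to the application hosting the WebView (rather than the `msedgewebview2.exe` runtime process itself). The `ConnectionEvent` record, its field names, and both lists are hypothetical and would need to match your own logging pipeline and environment.

```python
from dataclasses import dataclass
from typing import Iterable, List

# Assumption: illustrative lists only; extend them for your environment.
AI_SERVICE_DOMAINS = frozenset({"copilot.microsoft.com", "grok.com"})
EXPECTED_HOST_PROCESSES = frozenset({"msedge.exe", "chrome.exe", "firefox.exe"})


@dataclass(frozen=True)
class ConnectionEvent:
    """Hypothetical telemetry record: one outbound connection."""
    host_process: str  # application that owns the browser or WebView
    dest_host: str     # destination hostname


def is_ai_service(host: str) -> bool:
    """Return True if host is a listed AI service domain or a subdomain of one."""
    host = host.lower().rstrip(".")
    return any(host == d or host.endswith("." + d) for d in AI_SERVICE_DOMAINS)


def flag_unexpected_ai_access(events: Iterable[ConnectionEvent]) -> List[ConnectionEvent]:
    """Return events where an unexpected process contacted an AI service."""
    return [
        e for e in events
        if is_ai_service(e.dest_host)
        and e.host_process.lower() not in EXPECTED_HOST_PROCESSES
    ]


if __name__ == "__main__":
    sample = [
        ConnectionEvent("msedge.exe", "copilot.microsoft.com"),
        ConnectionEvent("invoice_viewer.exe", "grok.com"),
        ConnectionEvent("invoice_viewer.exe", "example.com"),
    ]
    for event in flag_unexpected_ai_access(sample):
        print(f"review: {event.host_process} -> {event.dest_host}")
```

A static allowlist like this will miss malware that injects into an expected browser process, so it complements behavioral analysis rather than replacing it.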

As AI assistants become more prevalent in enterprise environments, security teams must consider these platforms as potential attack vectors—not just productivity tools.